The 2026 Stack: Engineering a Personal Ecosystem

It has been about five years since I wrote about my first SFF build in the Ghost S1. At the time, I was just happy to fit a decent GPU into a tiny case without it melting. Looking at my desk in 2026, the tech itch has only grown. My setup has evolved from a single gaming rig into a full-blown personal data center.

Here is a look at what I am running now and the projects that are actually making use of all this overhead.

The Desk Setup: Eliminating Friction

The biggest challenge with my current setup was the annoyance of switching between my personal MacBook Pro and my gaming rig. I have spent a lot of time lately thinking about user friction, even when the user is just me sitting at my desk.

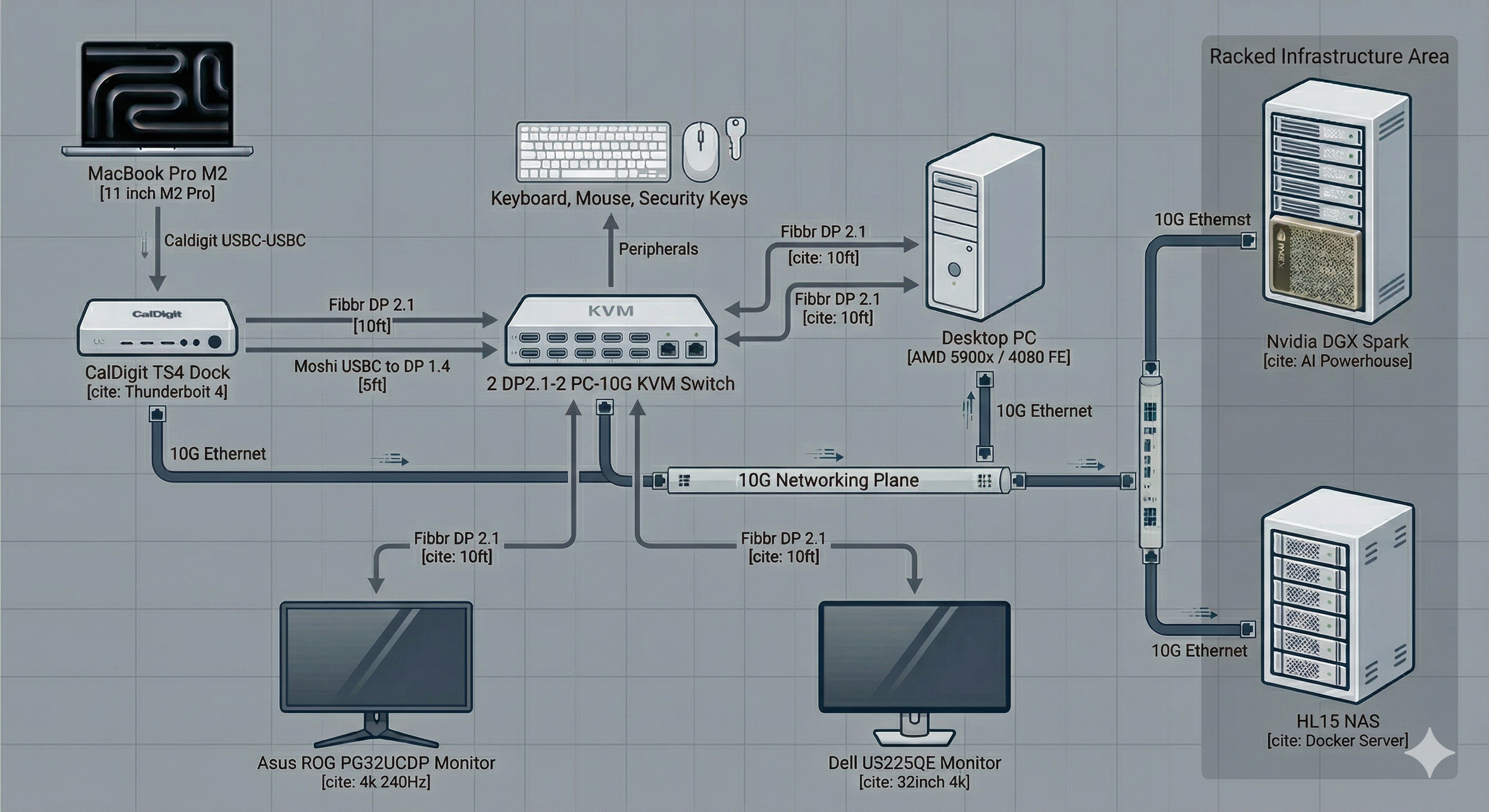

I finally solved this with a Level1Techs DisplayPort 2.1 KVM. This is the brain that allows me to use one keyboard and mouse across both machines without thinking about it.

The Displays: I am running an ASUS ROG PG32UCDP at 4K 240Hz and an LG 38WN95C at 144Hz. To keep those refresh rates stable, I am using Fibrr DP 2.1 and Moshi USB-C to DP 1.4 cables.

The Mac Side: Everything from my MacBook goes into a CalDigit TS4 dock. One cable connects to the laptop, and the dock handles the connection to the KVM.

The PC Side: My main rig stays tucked away. It is powered by a Ryzen 9 5900X and an RTX 4080 Founders Edition. It is linked to the KVM with high-bandwidth cables so that the switch itself never limits resolution or refresh rate.
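A rough way to sanity-check why cable and KVM bandwidth matter here is to compare the uncompressed pixel rate of each display against the usable payload of a DisplayPort link. The sketch below ignores blanking intervals and Display Stream Compression, assumes 10-bit RGB, and assumes a 3840x1600 panel for the LG (the resolution is not stated above). Which link mode each device actually negotiates is not something this arithmetic can tell you.

```python
# Back-of-the-envelope display bandwidth check (active pixels only).

def video_gbps(width, height, refresh_hz, bits_per_pixel=30):
    """Uncompressed data rate in Gbit/s; 30 bpp means 10-bit RGB."""
    return width * height * refresh_hz * bits_per_pixel / 1e9

# Usable payload after line coding, from the published DisplayPort figures.
DP14_HBR3_GBPS = 25.92    # DP 1.4, 4 lanes of HBR3
DP21_UHBR20_GBPS = 77.37  # DP 2.1, 4 lanes of UHBR20

displays = {
    "PG32UCDP 3840x2160 @ 240 Hz": video_gbps(3840, 2160, 240),
    # Resolution below is an assumption for illustration.
    "38WN95C 3840x1600 @ 144 Hz": video_gbps(3840, 1600, 144),
}

for name, gbps in displays.items():
    print(f"{name}: {gbps:.2f} Gbit/s uncompressed")
    print(f"  fits DP 1.4 HBR3 without DSC:   {gbps <= DP14_HBR3_GBPS}")
    print(f"  fits DP 2.1 UHBR20 without DSC: {gbps <= DP21_UHBR20_GBPS}")
```

In practice a link that cannot carry the uncompressed stream falls back on DSC or a lower bit depth, so treat the numbers as a reason to care about the cable chain rather than a statement of what each port is doing.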

Moving the Noise: The Basement Rack

A lot of the hardware I used to keep on my desk has moved to a server rack in the basement. This is better for my focus and the temperature in my study.

HL15 NAS: This is my primary storage node running Unraid. It has an Intel Xeon Gold 6230R and 84TB of raw storage. It handles my Plex library and acts as the data lake for my AI experiments.

NVIDIA DGX Spark: This lives in the rack as well, as a dedicated environment for LLM research. It is where I spend most of my free time these days.

Networking: The core of the house is Ubiquiti UniFi. I have a UDMSE, a USW Aggregation switch, and a USP PDU Pro all racked downstairs. Everything is tied together with a 10GbE backbone so that moving large datasets between the basement and my desk is not held back by the network.
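To put a number on what a 10G link buys, here is a small estimate of transfer time for a dataset at different link speeds. The 100 GB dataset size and the 90% efficiency factor are illustrative assumptions, not measurements from this network, and real transfers are often limited by disks rather than the wire.

```python
# Estimate how long a dataset takes to cross a network link.

def transfer_seconds(size_gb, link_gbps, efficiency=0.9):
    """Seconds to move size_gb gigabytes over a link_gbps link.

    efficiency is an assumed fraction of line rate left after
    protocol overhead; it is a placeholder, not a measured value.
    """
    return size_gb * 8 / (link_gbps * efficiency)

dataset_gb = 100  # hypothetical dataset size
for label, gbps in [("1GbE", 1), ("2.5GbE", 2.5), ("10GbE", 10)]:
    minutes = transfer_seconds(dataset_gb, gbps) / 60
    print(f"{label}: about {minutes:.1f} minutes for {dataset_gb} GB")
```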

AI Experimentation: LLMforge and Tintin

Lately, I have been moving from just using AI tools to actually building with them. This is where my love for deep-diving into niche subjects meets the technical side of my brain.

LLMforge-Nemotron

I have been working with a project called LLMforge-Nemotron. This is an experimental pipeline based on NVIDIA’s open recipes. The goal is to take general-purpose models and forge them into specialized tools using my local hardware.

I use Parameter-Efficient Fine-Tuning (PEFT), specifically LoRA, to adapt models to specific tasks. Instead of updating every weight, LoRA freezes the base model and trains small low-rank adapter matrices on top of it. I spend a lot of time evaluating different model sizes. It is a classic product problem where I have to decide whether to use a massive model for better accuracy or a smaller one that gives me faster inference. The pipeline also generates high-quality synthetic data to help teach the models new tricks.
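The reason LoRA fits on local hardware comes down to simple arithmetic. For a frozen weight matrix W of shape d_out x d_in, LoRA learns an update B @ A where B is d_out x r and A is r x d_in, so the trainable parameter count drops from d_out * d_in to r * (d_out + d_in). The layer size and rank below are illustrative assumptions, not the settings of the pipeline described here.

```python
# Trainable-parameter arithmetic for one LoRA-adapted linear layer.

def full_params(d_out, d_in):
    """Parameters trained when fine-tuning the whole weight matrix."""
    return d_out * d_in

def lora_params(d_out, d_in, rank):
    """Parameters trained by LoRA: B (d_out x rank) plus A (rank x d_in)."""
    return rank * (d_out + d_in)

# Hypothetical layer: a 4096 x 4096 projection with rank-16 adapters.
d_out, d_in, rank = 4096, 4096, 16
full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)

print(f"full fine-tune: {full:,} trainable parameters")
print(f"LoRA (r={rank}): {lora:,} trainable parameters")
print(f"LoRA trains {100 * lora / full:.2f}% of the layer")
```

The same arithmetic feeds the model-size decision: a larger base model still has to fit in memory for the forward pass, even though only the adapters receive gradients.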

The Tintin Project

The most practical application of this is my Tintin Project. It is an AI-powered system that indexes episodes of The Adventures of Tintin. I built a pipeline that turns raw video into searchable knowledge using multimodal embeddings and retrieval-augmented generation (RAG). Now, I can ask the system a question like "Where is Captain Haddock frustrated?" and it returns the exact timestamps. This is about taking unstructured data and turning it into something useful.
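The retrieval step of a system like this can be sketched in a few lines: each video clip is stored with its timestamps and an embedding, the question is embedded into the same space, and the closest clips are returned. Everything named below (the `Clip` class, the `search` function, the episode labels, and the toy 3-dimensional vectors) is hypothetical and stands in for a real multimodal embedding model and vector store.

```python
# Minimal timestamp retrieval over clip embeddings using cosine similarity.
import math
from dataclasses import dataclass

@dataclass
class Clip:
    episode: str
    start_s: float
    end_s: float
    embedding: list  # would come from a multimodal embedding model

def cosine(a, b):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def search(query_embedding, clips, top_k=2):
    """Return (episode, start, end) for the top_k most similar clips."""
    ranked = sorted(
        clips,
        key=lambda c: cosine(query_embedding, c.embedding),
        reverse=True,
    )
    return [(c.episode, c.start_s, c.end_s) for c in ranked[:top_k]]

# Toy index: placeholder episodes and made-up vectors.
clips = [
    Clip("episode-a", 12.0, 19.5, [0.9, 0.1, 0.0]),
    Clip("episode-a", 40.0, 47.0, [0.1, 0.9, 0.1]),
    Clip("episode-b", 5.0, 11.0, [0.8, 0.2, 0.1]),
]
query = [1.0, 0.0, 0.0]  # stands in for the embedded question

for episode, start, end in search(query, clips):
    print(f"{episode}: {start:.1f}s to {end:.1f}s")
```

In a full RAG setup, the retrieved clips and their timestamps would then be passed to a language model as context for the final answer.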

Observability: Not Flying Blind

When I am running a fine-tuning job on the Spark or the 4080, I need to know exactly how the hardware is behaving. I put together a custom Prometheus and Grafana monitoring stack specifically for these AI workloads. It tracks VRAM usage, power draw, and thermals in real time. This turns the black box of AI training into a transparent process where I can see exactly where the bottlenecks are.
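One simple way to get GPU numbers into Prometheus is to parse `nvidia-smi` CSV output and render it in the Prometheus text exposition format, which can then be scraped or dropped into the node_exporter textfile collector directory. This is a sketch of that idea, not the stack described above: the metric names are made up for illustration, and fields that a given GPU reports as non-numeric are simply skipped.

```python
# Turn nvidia-smi CSV output into Prometheus text exposition format.
import subprocess

QUERY = [
    "nvidia-smi",
    "--query-gpu=index,memory.used,power.draw,temperature.gpu",
    "--format=csv,noheader,nounits",
]

# Hypothetical metric names, in the same order as the queried fields.
METRIC_NAMES = [
    "gpu_memory_used_mib",
    "gpu_power_draw_watts",
    "gpu_temperature_celsius",
]

def parse_smi_csv(text):
    """Parse CSV rows into dicts, skipping values that are not numeric."""
    rows = []
    for line in text.strip().splitlines():
        fields = [f.strip() for f in line.split(",")]
        row = {"gpu": fields[0]}
        for name, raw in zip(METRIC_NAMES, fields[1:]):
            try:
                row[name] = float(raw)
            except ValueError:
                pass  # e.g. a field reported as not available
        rows.append(row)
    return rows

def to_exposition(rows):
    """Render parsed rows as Prometheus gauge samples."""
    lines = []
    for name in METRIC_NAMES:
        lines.append(f"# TYPE {name} gauge")
        for row in rows:
            if name in row:
                lines.append(f'{name}{{gpu="{row["gpu"]}"}} {row[name]}')
    return "\n".join(lines) + "\n"

def read_gpus():
    """Query the local GPUs; requires nvidia-smi on the PATH."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_smi_csv(out.stdout)

# Demonstration with a made-up sample line instead of a live GPU.
sample = "0, 10240, 285.50, 67\n"
print(to_exposition(parse_smi_csv(sample)))
```

Swapping `parse_smi_csv(sample)` for `read_gpus()` on a machine with an NVIDIA driver installed would report live values; Grafana then only needs a dashboard pointed at these gauges.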

Final Thoughts

My 2026 stack is a long way from that Ghost S1 build, but the core motivation is the same. It is about building a space where I can explore things that interest me without the technology getting in the way. By treating my home lab like a product and focusing on observability, I have ended up with a setup that is as fun to manage as it is to use.